Let us reflect for a moment. When we think of a person, what comes to mind first? Is it only their face, or also their voice, the way they speak, or perhaps the music playing in the background at the café where we last met them? Most of the time, our memories are not limited to a single snapshot or image. A song that suddenly brings back childhood memories, or a particular sound that transports us to a place we visited years ago, suggests that memory is not tied to any single sense.

Our everyday experiences clearly indicate that memory does not operate through a single sensory channel. Remembering is often the outcome of a more holistic process in which multiple senses work together. In this article, I explore why understanding memory as the output of a single sense is insufficient and how multisensory input shapes the way we remember.

Understanding multisensory memory, sensory integration, and memory encoding allows us to see remembering not as a static recording, but as an active and dynamic reconstruction.

Looking At Memory Through Sensory Modalities

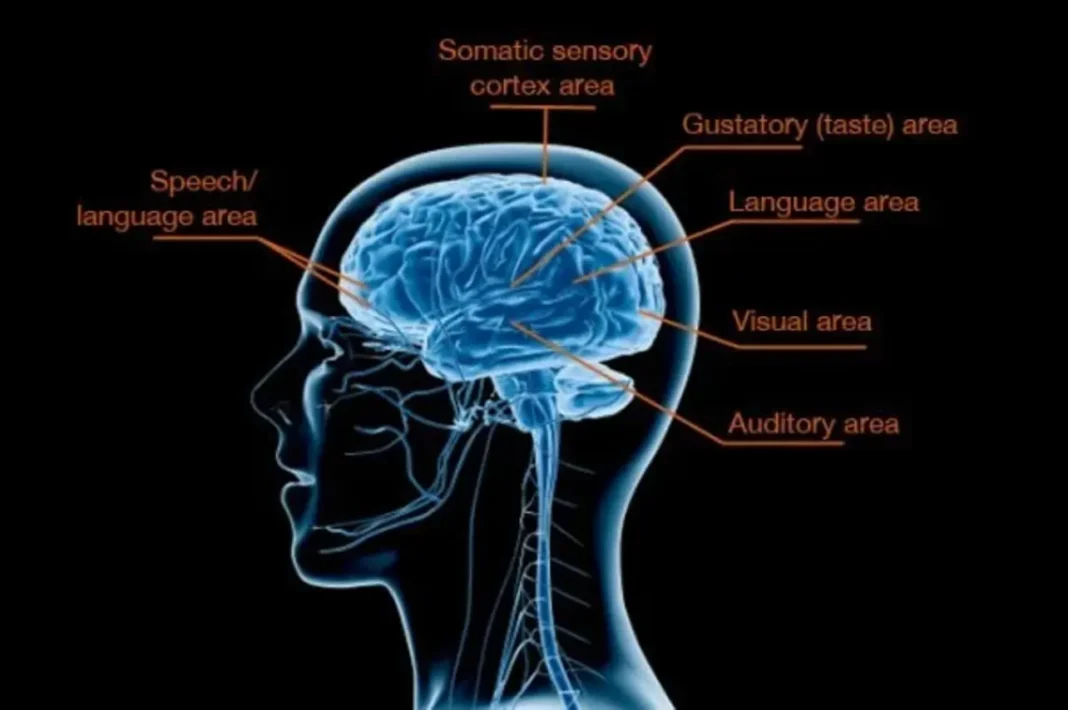

For a long time, memory research has described memory in terms of separate sensory systems. Concepts such as visual memory, auditory memory, or tactile memory have been used to explain how information is represented in the brain. While this framework has been useful for experimental research, it remains limited in its ability to capture real-life experiences.

Events in everyday life are rarely confined to a single sense. Encountering another person does not simply involve seeing their face; it also includes hearing their voice, perceiving the atmosphere of the environment, and integrating all of this information into a meaningful context. Rather than storing these inputs as independent fragments, the brain organizes them through their relationships and shared context.

Viewing memory as a set of isolated sensory compartments therefore offers an incomplete picture of how memory actually functions.

Why Do Multiple Senses Strengthen Memory?

Encoding information through more than one sensory modality provides a clear advantage for remembering. One key reason is the increased variety of retrieval cues available during recall. When access to a visual detail becomes difficult, an auditory cue or a contextual association between different senses may still support remembering. As a result, memory retrieval is no longer dependent on a single pathway but can be approached through multiple routes.

Research supports this view, showing that information presented through multiple senses leaves stronger memory traces. For example, studies have found that learning conditions combining visual and auditory information lead to more accurate and longer-lasting recall than unimodal presentations (Shams & Seitz, 2008). These findings suggest that memory is sensitive not only to what type of information is perceived, but also to how information from different senses is integrated.

When the brain receives converging input from multiple sensory systems, it can form richer, more interconnected representations. These representations make memory more durable and more resistant to forgetting.

The Fragility Of Unisensory Learning

Learning that relies on a single sensory channel tends to produce more fragile memory representations. One reason is that unisensory encoding confines information to a narrower representation and reduces opportunities for integration across sensory inputs.

This fragility becomes particularly evident as digital content consumption increases. On social media platforms, information is often presented as fast, fragmented stimuli targeting a single sensory modality. In this context, the widespread habit of endless scrolling puts particular strain on attention and memory. Users are constantly encouraged to shift their attention in anticipation of a new and potentially more engaging stimulus.

This continuous expectation of novelty interferes with sustained processing and makes it difficult to link information across representational levels. As a result, content is stored in memory as disconnected and superficial traces rather than as part of a meaningful whole.

In contrast, experiences that engage multiple senses allow attention to be maintained for longer periods and support the formation of more robust memory representations.

Memory Is Not A Passive Storage System

Viewing memory as a passive storage space overlooks its dynamic nature. Memory is continuously shaped by what we perceive, where our attention is directed, and how we assign meaning to our experiences. An event remains vivid in memory not merely because it is intense, but because it can be recalled in different contexts and reshaped by new experiences.

From this perspective, memory is not the product of a single sense. Memories emerge from the joint processing of what we see, what we hear, what we feel, and the context in which these experiences occur. Memories of multisensory experiences tend to be more durable and more easily accessible.

For this reason, explaining memory solely through questions such as "What did we see?" or "What did we hear?" remains insufficient. Memory does not arise from the isolated traces left by individual senses, but from the point at which they intersect.

At its core, remembering is not a snapshot; it is an integration.